The Last Syntax

How AI-assisted programming is (not) just another step in human-machine communication

by Hans-Peter Schmidt

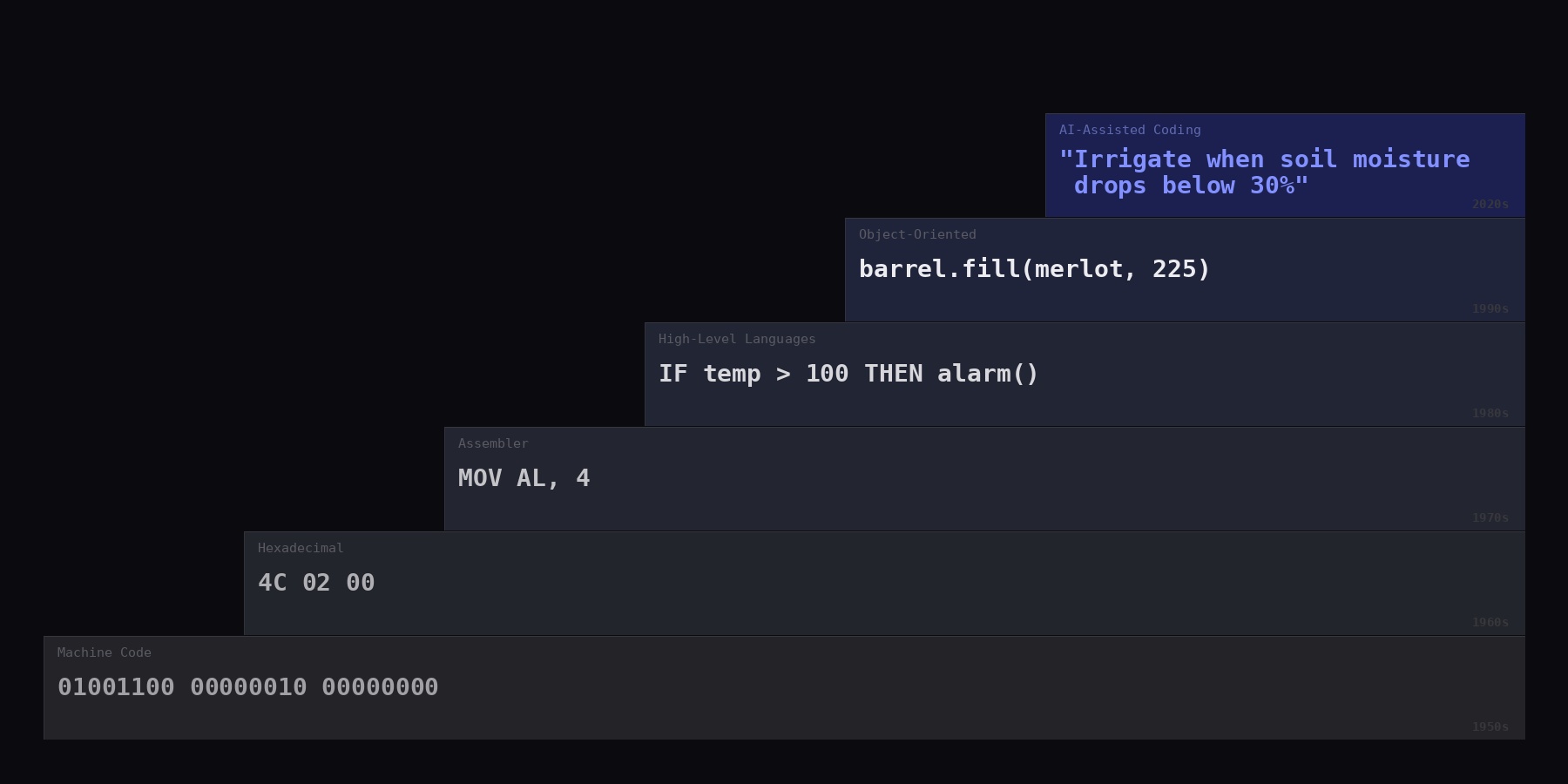

When the first programmers sat down at their machines in the 1950s, they wrote numbers. Not equations, not instructions in any human language — numbers. A sequence like 10110000 00000100 told the processor to move a value into a register. Another sequence told it to add. A third told it to jump to a different location in memory. Every operation, every memory address, every conditional branch had to be encoded as a binary pattern that the processor could execute directly. A single misplaced bit — a 0 where a 1 should have been — could crash the entire machine or, worse, produce silently wrong results that no one noticed until the damage was done.

This was programming in machine code. It was exact, it was powerful, and it was brutal. Writing a program that did something as mundane as sorting a list of names required the programmer to think like the processor: which register holds what, where in memory does the next instruction sit, how many bytes to jump forward if a comparison fails. The mental effort was not spent on the problem to be solved but on the mechanical details of how the machine digested instructions.

From machine code to hexadecimal and assembler

The first small mercy was hexadecimal notation. Instead of writing out long binary strings, programmers could use base-16 digits (0 through 9, then A through F) to represent the same instructions more compactly. The binary number 1001010 becomes 4A in hexadecimal. On a 6502-based Commodore in the late 1970s, a programmer who wanted to jump to a specific memory address did not type 01001100; they typed 4C, followed by the two-byte address. A reference card taped to the desk translated between the hexadecimal codes and their meaning: 4C = jump, A9 = load a value, 8D = store it. (A Z80 system worked the same way, with its own set of codes.) The machine still received binary code as instruction. But the human interface had shifted from ones and zeros (for no and yes) to something marginally less hostile, though still nerdy. It was the first step on a staircase from binary decisions to a more human-like language for steering a machine.
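The correspondence between the two notations is purely mechanical, which a few lines of Python can show. This is a minimal sketch; the helper names bits_to_hex and hex_to_bits are made up for this illustration and do not come from any historical tool.

```python
def bits_to_hex(bits: str) -> str:
    """Return the hexadecimal form of a string of 0s and 1s."""
    value = int(bits.replace(" ", ""), 2)  # parse the base-2 text into an integer
    return format(value, "X")              # render that integer in base 16


def hex_to_bits(hex_digits: str, width: int = 8) -> str:
    """Return the zero-padded binary form of a hexadecimal string."""
    return format(int(hex_digits, 16), f"0{width}b")


print(bits_to_hex("1001010"))   # 4A
print(bits_to_hex("01001100"))  # 4C
print(hex_to_bits("4C"))        # 01001100
```

Nothing is gained or lost in either direction: four bits always map to exactly one hexadecimal digit, which is why the notation was easier on the eyes without changing what the machine received.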

Then came assembler. Instead of writing 10110000 00000100 (or B004 in hexadecimal), a programmer could now write MOV AL, 4 — move the value 4 into a small storage slot called AL inside the processor. The instruction was identical to the binary and hexadecimal version, but now a human could read it. A translation program called an assembler converted the mnemonic back into the binary the processor needed to steer the machine. The abstraction was minimal. Each line of assembler still corresponded to exactly one machine instruction. But the difference was enormous: the programmer could now read what the code was supposed to do without first decoding hexadecimal patterns in their head.
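What an assembler does for this one instruction can be sketched in a few lines. The following is a toy, not a real assembler: it handles only the x86 form "MOV r8, imm8" for four registers, and the function name assemble_mov is an assumption made for this illustration.

```python
# On x86, "MOV r8, imm8" is encoded as the byte 0xB0 plus the register
# number, followed by the one-byte value. Only four registers are covered.
REGISTER_NUMBER = {"AL": 0, "CL": 1, "DL": 2, "BL": 3}


def assemble_mov(line: str) -> bytes:
    """Translate a line such as 'MOV AL, 4' into its two machine-code bytes."""
    mnemonic, operands = line.strip().split(maxsplit=1)
    if mnemonic.upper() != "MOV":
        raise ValueError(f"this toy assembler only knows MOV, not {mnemonic}")
    register, immediate = (part.strip() for part in operands.split(","))
    value = int(immediate, 0)  # accepts decimal ("4") or hex ("0x04") text
    if not 0 <= value <= 0xFF:
        raise ValueError("the value must fit into one byte")
    opcode = 0xB0 + REGISTER_NUMBER[register.upper()]
    return bytes([opcode, value])


machine_code = assemble_mov("MOV AL, 4")
print(machine_code.hex(" ").upper())                       # B0 04
print(" ".join(f"{byte:08b}" for byte in machine_code))    # 10110000 00000100
```

The translation is a table lookup plus a little arithmetic, one line in and one instruction out, which is exactly why the abstraction was minimal.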

The reaction from experienced machine code programmers was predictable. Adding a translation layer between the programmer and the hardware, they argued, meant giving up direct control. The translation step might introduce inefficiencies, and it obscured what was really happening. Those objections from programming purists were understandable: they came from professionals who had mastered a difficult craft and who felt that something was being taken away from them. What they did not see, or did not want to see, was that what they gained in return (readability, speed of development, fewer errors) far outweighed the loss.

Edsger Dijkstra, the Dutch computer scientist who received the Turing Award in 1972, was among the first to articulate what was happening in theoretical terms. In his 1972 "Notes on Structured Programming," he argued that the purpose of abstraction is not to be vague, but to create a new semantic level in which one can be absolutely precise (Dijkstra, 1972). Each new programming language was not a retreat from precision; it was the creation of a new level at which precision could be exercised more effectively. Kim (2024) demonstrated this principle by tracing how a single operation, adding 1 + 1, is expressed at each level of abstraction, from x86 assembly through C, Java, and Python, all the way to a natural-language prompt to a large language model. The operation is identical; only the interface changes.

The next step to higher-level programming languages

Fortran, BASIC, and Pascal, which appeared between 1957 and 1970, allowed programmers to write commands such as “IF temperature > 100 THEN activate_alarm” instead of juggling registers and jump addresses. A single line of Pascal might compile into dozens of machine instructions, but the programmer no longer needed to know or care about any of them. The compiler, the program that translates source code into machine instructions, handled that work. Pascal, designed in 1970 by Niklaus Wirth at ETH Zurich, was explicitly intended as a teaching language: a tool to make structured programming accessible to students who were not hardware specialists (Wirth, 1971). Wirth received the Turing Award in 1984 for "developing a sequence of innovative computer languages" and spent his career demonstrating that elegance and simplicity were not concessions to beginners but engineering virtues in their own right (Wirth, 1995). Here again, skeptics warned that the resulting code would be slower, less elegant, less controlled. Within a decade, however, virtually no one outside of hardware engineering and embedded systems wrote assembler anymore.
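The fan-out from one readable line to many low-level steps can still be observed today. The sketch below uses Python's standard dis module as a stand-in: it lists bytecode for Python's virtual machine, not real processor instructions, so it is an analogy to what a Pascal compiler did rather than a reproduction of it. The function name check_temperature is hypothetical.

```python
import dis


def check_temperature(temperature, activate_alarm):
    # The Python counterpart of: IF temperature > 100 THEN activate_alarm
    if temperature > 100:
        activate_alarm()


# Each entry is one low-level step the interpreter executes for the code above.
instructions = list(dis.get_instructions(check_temperature))
for instruction in instructions:
    print(instruction.opname, instruction.argrepr)

print(len(instructions), "low-level instructions for a single IF statement")
```

The exact list differs between Python versions, but it always contains separate steps for loading the value, loading the constant, comparing, and jumping: the same bookkeeping that an assembler programmer once wrote by hand.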

Object-oriented languages like Java, Python, and C# added yet another layer. Instead of writing a program as a long sequence of instructions, the programmer could now define "objects" that mirrored things in the real world. A winery management program, for example, would contain an object called "barrel" that carried its own data — volume, grape variety, date filled, cellar position — and its own behaviors: barrel.fill(), barrel.rack(), barrel.sample(). The programmer no longer needed to remember which variable held which barrel's data or write separate instructions to manipulate each one. The object knew what it was and what it could do. The loops, the memory management, the interaction with the operating system still existed underneath, but the programmer operated at the level of meaning rather than mechanism.
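The barrel example might look as follows in Python. This is a sketch under stated assumptions: the field names, the method signatures, and the rule that racking moves the whole content into another barrel are choices made for this illustration, not taken from any actual winery software.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Barrel:
    """One barrel that carries its own data and its own behaviors."""
    capacity_liters: float
    cellar_position: str
    grape_variety: Optional[str] = None
    date_filled: Optional[date] = None
    contents_liters: float = 0.0

    def fill(self, liters: float, grape_variety: str, on: date) -> None:
        """Add wine to the barrel and record what it is and when it went in."""
        if self.contents_liters + liters > self.capacity_liters:
            raise ValueError("barrel would overflow")
        self.contents_liters += liters
        self.grape_variety = grape_variety
        self.date_filled = on

    def sample(self, liters: float = 0.05) -> float:
        """Draw a small tasting sample and return the amount actually taken."""
        taken = min(liters, self.contents_liters)
        self.contents_liters -= taken
        return taken

    def rack(self, target: "Barrel") -> None:
        """Move all wine into another barrel, leaving this one empty."""
        if self.grape_variety is None or self.date_filled is None:
            raise ValueError("cannot rack an empty barrel")
        target.fill(self.contents_liters, self.grape_variety, self.date_filled)
        self.contents_liters = 0.0
        self.grape_variety = None
        self.date_filled = None


old_barrel = Barrel(capacity_liters=225, cellar_position="A3")
new_barrel = Barrel(capacity_liters=225, cellar_position="B1")
old_barrel.fill(220, "Pinot Noir", date(2024, 10, 1))
old_barrel.sample()
old_barrel.rack(new_barrel)
print(new_barrel.cellar_position, new_barrel.grape_variety, new_barrel.contents_liters)
```

The calling code never touches a loose variable: it asks a barrel to do something, and the barrel updates its own state.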

Programming moved closer to human language. The translation into machine code, meanwhile, became more complex, but that complexity was now the machine's burden, not the programmer's. Purist coders feared losing authority, and to some it felt like handing over the steering wheel to the compiler. By then the pattern was clear: at every transition from one generation of languages to the next, a new layer of translation was inserted between the programmer's intent and the machine's execution. The programmer moved upward, closer to human thinking, further from binary logic. The tooling absorbed the layers below. And at every step, the programmers who had mastered the previous level feared that their expertise was being devalued.

Fred Brooks, who managed the development of IBM's System/360 and its operating system in the 1960s, analyzed this dynamic in his influential 1986 essay "No Silver Bullet." Brooks distinguished between accidental complexity — the mechanical difficulty of expressing a solution in a particular language or on a particular machine — and essential complexity — the inherent difficulty of the problem itself. He argued that high-level languages were the single largest productivity gain in the history of software, precisely because they removed accidental complexity and let programmers focus on the essential (Brooks, 1986). Once accidental complexity is stripped away — and we will see how radically the latest step does this — what remains is the hard part: understanding the problem, designing the architecture, getting the logic right.

Purist coders were partly right though. The specific skill of writing correct assembler lost market value. But the deeper expertise — understanding how systems work, reasoning about correctness, decomposing complex problems, anticipating what can go wrong — not only survived each transition but became more valuable. The programmer who understood assembler wrote better Pascal, because they understood what the compiler was doing underneath. The programmer who understood data structures and algorithms wrote better Python, because they could reason about performance when it mattered. Each new abstraction layer did not replace knowledge. It shifted the level at which knowledge was applied.

Who gets to code

There is a second consequence of this history that is less often discussed but arguably more significant. Each step in the evolution of programming languages did not just change how programmers worked — it changed how many people could program at all.

Machine code was the domain of a tiny priesthood. In the 1950s, perhaps a few thousand people worldwide could write it. The intellectual prerequisites were severe: one needed to understand processor architecture, binary arithmetic, memory layout, and electrical engineering, often simultaneously. Assembler lowered the threshold only slightly; the circle widened, but it remained a highly specialized craft. Higher-level languages changed this substantially. A scientist who needed to solve differential equations could learn Fortran without first understanding how a processor's instruction pipeline worked. An accountant could write a BASIC program to manage inventory without knowing what a register was. The number of people who could instruct a computer grew by orders of magnitude.

The democratization of programming was not an accidental side effect; it was an explicit design goal. In 1964, John Kemeny and Thomas Kurtz at Dartmouth College created BASIC (Beginner's All-purpose Symbolic Instruction Code) specifically so that students in the humanities, social sciences, and arts could use computers. Kemeny later said that their vision was that every student on campus should have access to a computer, and any faculty member should be able to use one in the classroom (Kemeny & Kurtz, 1985). They made the compiler freely available. BASIC became the lingua franca of the microcomputer revolution in the 1970s and 1980s, and an entire generation of programmers wrote their first lines of code in it, including several who went on to found major technology companies. BASIC was not just an educational tool; it was the incubator of an industry.

Annette Vee, in her book Coding Literacy (2017), traces this arc explicitly, arguing that programming has followed the same trajectory as reading and writing: from a specialist skill reserved for a trained elite to a broadly accessible form of literacy. Python took this further still. Its syntax reads almost like pseudocode. University students in biology, economics, or linguistics — people with no formal training in computer science — began writing programs to analyze data, automate experiments, and build models. The fact that Python became the most widely taught programming language in the world is not because it is the most powerful. It is because it is the most accessible. Each rung on the abstraction staircase brought more people into the room.

The latest step: AI-assisted coding

The latest generation of programming tools (Claude Code by Anthropic, Codex by OpenAI, and others) adds another translation layer on top of the existing stack. The programmer describes their intention in plain language. Not in the syntax of Python or Java, but in English, or French, or Hindi. The tool generates the code. The person who wrote the instructions reviews the result, tests it, and integrates it into the larger IT system. There are still compilers, operating systems, and processors underneath. Nothing has been removed. A new layer has simply been added on top, one that converts instructions given in human language into source code, just as compilers convert source code into machine instructions and assemblers convert mnemonics into binary.

The language used to instruct a tool like Claude Code may be natural, but the thinking behind it must be precise. A vague instruction produces vague code, just as a vague specification produced a bad program in any previous era. The user must learn to formulate intent clearly, to break complex goals into manageable steps, to verify the result and refine the instruction when it falls short. There is a craft to this, and it improves with practice. The human learns what the tool responds to; the tool, in turn, teaches the human by revealing where the instruction was ambiguous. It is a new skill. It may look easy, because a poor instruction still produces some result while poor Python code simply crashes, but to reach truly excellent results, using language to instruct a machine is an expertise in its own right.

When the interface between human and machine becomes natural language, a syntactic barrier falls. A vineyard manager who has never written a line of code but who understands exactly how the irrigation system should respond to soil moisture data can now describe that logic and obtain a working program. A schoolteacher who wants to build a quiz application for her class does not need to learn JavaScript first. A ski designer who wants to calculate the influence of the inner layup of materials on the ski's behavior on snow can now do it by himself in a week or two instead of employing specialists for a year or more. The staircase of abstraction in programming languages has always been, simultaneously, a staircase of democratization. Each step made the machine accessible to more people, not fewer. Each step was resisted by those who were already inside the room — and each step, in the end, created more work, not less, because the number of problems that could be addressed with software expanded faster than the number of people who could address them.

The discomfort this provokes is also not new. There is, however, an additional psychological dimension that deserves attention. Using a compiler or a framework still feels like programming, nerdy and professional. Using natural language to generate code can feel like something else entirely — like delegating the thinking itself, not just the mechanical translation. It can feel like cheating. But anyone who has spent an afternoon wrestling with an AI tool to produce correct, well-structured code for a non-trivial problem knows that the effort has not disappeared. It has moved — from syntax to precision of thought.

The boundary between "thinking" and "mechanical translation" has shifted at every stage of this history. When a Pascal programmer wrote a FOR loop instead of manually setting up a counter register, a comparison instruction, and a conditional jump, they were also delegating thought to the machine. When a Python programmer solves a complex integral by typing a single line, scipy.integrate.quad(f, 0, np.inf), instead of working through the calculus step by step, they are relying on someone else's solution to a problem that once required considerable intellectual effort and a sharp pencil. Each of these steps felt, at the time, like it crossed a line. Each of them, in retrospect, simply moved the line.
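The delegation hidden in a FOR loop can be made visible by writing the same computation at three levels. The sketch below stays in Python throughout, so the "manual" version only imitates the counter, comparison, and jump that an assembler programmer would have managed in registers; the list of values is arbitrary.

```python
values = [3, 1, 4, 1, 5]

# Spelled out: the programmer manages the counter, the comparison, and the jump.
total_manual = 0
index = 0                          # stands in for the counter register
while True:
    if index >= len(values):       # the comparison instruction
        break                      # the conditional jump out of the loop
    total_manual += values[index]
    index += 1                     # increment, then jump back to the top

# Delegated: the FOR loop hides the counter, the comparison, and the jump.
total_for = 0
for value in values:
    total_for += value

# Delegated further: a built-in routine hides the loop itself.
total_builtin = sum(values)

print(total_manual, total_for, total_builtin)  # 14 14 14
```

All three versions compute the same result. What changes is how much of the mechanism the programmer has to hold in their head, which is the line that each generation of tools has moved.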

Democratization of the scientific method

There is another dimension to this shift that may prove more consequential than the change in language itself. Traditional programming was slow. Testing a hypothesis computationally — say, whether a particular formula correctly predicts how forces distribute through a bent ski on an icy slope — required first writing the program to calculate it, which alone could take weeks. If the approach turned out to be wrong, the effort was largely lost. Starting over with a different mathematical model meant writing a different program, which took weeks again. Programmers and scientists learned, out of necessity, to plan exhaustively and commit only to approaches they were reasonably certain would work. This was prudent. Time was the most constrained resource.

AI-assisted coding inverts this. When a working prototype can be generated in minutes and revised in seconds, the marginal cost of being wrong drops toward zero. The programmer — or the vineyard manager, or the ski designer — can try an approach, observe the result, adjust, and try again. This is not trial and error in the pejorative sense. It is the scientific method: hypothesis, experiment, observation, refinement. Before, this cycle took days or weeks per iteration. Now it takes minutes. The consequence is not merely that software gets built faster. It is that experimentation becomes available to people and in settings where the cost of traditional development would have ruled it out entirely. This is not just another step on the staircase of abstraction. It is a new story in the building, an added floor that changes the view from every window.

If we think about what gated the scientific method historically, it was not its logic: hypothesis, experiment, observation, and refinement are intuitive to any curious person. What gated it was the cost of the experiment. You needed a laboratory, equipment, materials, institutional access, and, crucially, enough time and funding to be wrong repeatedly before being right. That's why most scientific progress was, for centuries, the province of universities and well-funded institutions. Singular exceptions like Einstein, who developed special relativity while working as a clerk at the patent office in Bern, proved the rule. But imagine what he could have done with computational tools like Claude Code.

What AI tools do in the software domain is collapse the cost of the experiment to near zero. And software is no longer just software — it's the medium through which people model climate systems, analyze genomic data, simulate structural loads, optimize supply chains. When a vineyard manager can formulate a hypothesis about irrigation timing, build a model in an afternoon, test it against real data, and refine it — she is doing science. She just doesn't need a computer science degree or a research grant to do it anymore.

Literature

Brooks FP (1986): No Silver Bullet — Essence and Accident in Software Engineering. Proceedings of the IFIP Tenth World Computing Conference, pp. 1069–76. Reprinted in: The Mythical Man-Month, Anniversary Edition, Addison-Wesley, 1995.

Dijkstra EW (1972): Notes on Structured Programming. In: Structured Programming, Academic Press, London, pp. 1–82.

Kemeny JG & Kurtz TE (1985): Back to BASIC: The History, Corruption, and Future of the Language. Addison-Wesley, Reading, MA.

Kim T (2024): From 1+1 in Assembly to LLMs: The Evolution of Computing Abstraction. Blog post, November 2024. https://taewoon.kim/2024-11-12-1+1/

Vee A (2017): Coding Literacy: How Computer Programming is Changing Writing. MIT Press, Cambridge, MA.

Wirth N (1971): The Programming Language Pascal. Acta Informatica 1(1), pp. 35–63.

Wirth N (1995): A Plea for Lean Software. IEEE Computer 28(2), pp. 64–68.